a modern open source blockchain data stack

1.The challenge of modern blockchain data stack

There are several challenges that a modern blockchain indexing startup can face, including:

- Huge amounts of data. As the amount of data on the blockchain increases, the data index will need to be scaled up to handle the increased load and provide efficient access to the data. Consequently, it leads to higher storage costs, slow calculation of calculations and increased load on the database server.

- Complex data processing pipeline. Blockchain technology is complex, and building a comprehensive and reliable data index requires a deep understanding of the underlying data structures and algorithms. The diversity of blockchain implementations inherits it. Given specific examples, NFTs in Ethereum are typically created within smart contracts following the ERC721 and ERC1155 formats. In contrast, the implementation of those on Polkadot, for example, is usually built directly within the blockchain execution. These should be considered as NFTs and should be stored as such.

- Integration capabilities. To provide maximum value to users, a blockchain indexing solution may need to integrate the data index with other systems, such as analytics platforms or APIs. This is challenging and requires considerable effort in the architectural design.

As blockchain technology has become more widespread, the amount of data stored on the blockchain has increased. This is because more people are using the technology, and each transaction adds new data to the blockchain. In addition, blockchain technology has evolved from simple money transfer applications, such as those involving the use of Bitcoin, to more complex applications involving the implementation of business logic within smart contracts. These smart contracts can generate large amounts of data, contributing to increased complexity and size of the blockchain. Over time, this has led to a larger and more complex blockchain.

In this article, we review the evolution of Footprint Analytics’ technology architecture in stages as a case study to explore how the Iceberg-Trino technology stack addresses the challenges of data on the chain.

Footprint Analytics has indexed approximately 22 public blockchain data and 17 NFT marketplaces, 1,900 GameFi projects, and over 100,000 NFT collections in a semantic abstraction data layer. It is the most comprehensive blockchain data warehouse solution in the world.

Regardless of blockchain data, which includes over 20 billion rows of records of financial transactions, which data analysts often query. it is different from intrusion logs in traditional data warehouses.

We have experienced three major upgrades in recent months to meet the growing business demands:

2. Architecture 1.0 Bigquery

In the beginning of Footprint Analytics, we used Google Bigquery as our storage and search engine; Bigquery is a great product. It’s lightning fast, easy to use, and provides dynamic arithmetic power and a flexible UDF syntax that helps us get the job done quickly.

However, Bigquery also has several problems.

- Data is not compressed, resulting in high costs, especially when storing raw data from over 22 Footprint Analytics blockchains.

- Insufficient concurrency: Bigquery only supports 100 concurrent queries, which is unsuitable for Footprint Analytics high concurrency scenarios when serving many analysts and users.

- Lock in with Google Bigquery, which is a closed source product.

So we decided to explore other alternative architectures.

3. Architecture 2.0 OLAP

We were very interested in some of the OLAP products that had become very popular. The most attractive advantage of OLAP is its query response time, which typically takes subseconds to return search results for huge amounts of data, and it can also support thousands of concurrent queries.

We chose one of the best OLAP databases, Doris, to try it out. This engine works well. At some point, however, we soon ran into some other problems:

- Data types such as Array or JSON are not yet supported (November 2022). Arrays are a common type of data in some blockchains. For example, the subject field in evm logs. The fact that we cannot calculate on Array directly affects our ability to calculate many business calculations.

- Limited support for DBT, and for merger statements. These are common requirements for data engineers for ETL/ELT scenarios where we need to update some newly indexed data.

That said, we couldn’t use Doris for our entire data pipeline in production, so we tried to use Doris as an OLAP database to solve part of our problem in the data production pipeline, acting as a query engine and providing fast and high concurrency query capabilities.

Unfortunately, we couldn’t replace Bigquery with Doris, so we had to periodically sync data from Bigquery to Doris using it as a query engine. This synchronization process had several problems, one of which was that the update writes piled up quickly when the OLAP engine was busy serving queries to the front-end clients. Then the speed of the writing process was affected and synchronization took much longer and sometimes even became impossible to complete.

We realized that OLAP could solve several problems we face and could not become the turnkey solution of Footprint Analytics, especially for data processing pipeline. Our problem is bigger and more complex, and we can say that OLAP as a search engine alone was not enough for us.

4. Architecture 3.0 Iceberg + Trino

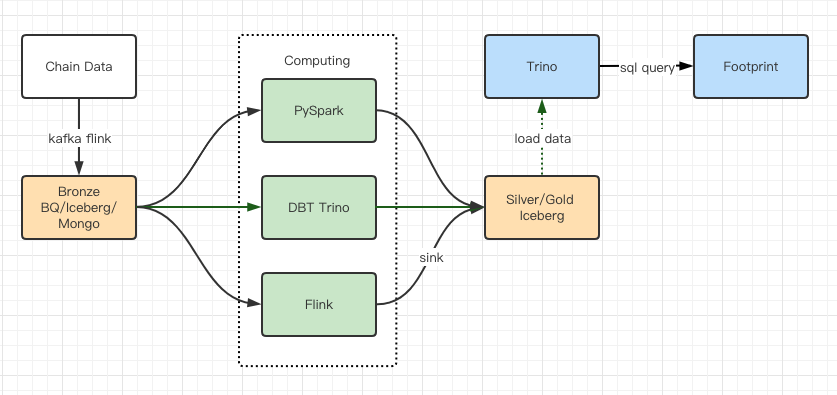

Welcome to Footprint Analytics architecture 3.0, a complete overhaul of the underlying architecture. We have redesigned the entire architecture from the ground up to divide the storage, computation and query of data into three distinct parts. Take lessons from the two previous architectures of Footprint Analytics and learn from the experiences of other successful big data projects such as Uber, Netflix and Databricks.

4.1. Introduction of the data lake

We first turned our attention to the data lake, a new type of data storage for both structured and unstructured data. Data Lake is perfect for on-chain data storage as the formats of on-chain data range widely from unstructured raw data to structured abstraction data that Footprint Analytics is known for. We expected to use data lake to solve the problem of data storage, and ideally it would also support mainstream data processing engines like Spark and Flink, so it wouldn’t hurt to integrate with different types of processing engines as Footprint Analytics evolves.

Iceberg integrates very well with Spark, Flink, Trino and other calculation engines and we can choose the most suitable calculation for each of our calculations. For example:

- For those who require complex computational logic, Spark will be the choice.

- Good for real-time calculation.

- For simple ETL tasks that can be performed using SQL, we use Trino.

4.2. Query engine

When Iceberg solved the storage and computation problems, we had to think about choosing a search engine. There are not many options available. The alternatives we considered were

The most important thing we considered before going deeper was that the future search engine had to be compatible with our current architecture.

- To support Bigquery as a data source

- To support DBT, which we depend on to produce many calculations

- To support the BI tool’s metabase

Based on the above, we chose Trino, which has very good support for Iceberg and the team was so responsive that we raised a bug, which was fixed the next day and released to the final version the following week. This was the best choice for the Footprint team, which also requires high implementation responsiveness.

4.3. Performance testing

Once we decided on our direction, we did a performance test on the Trino + Iceberg combo to see if it could meet our needs, and to our surprise, queries were incredibly fast.

Knowing that Presto + Hive has been the worst comparator for years in all the OLAP hype, the combination of Trino + Iceberg was completely confused.

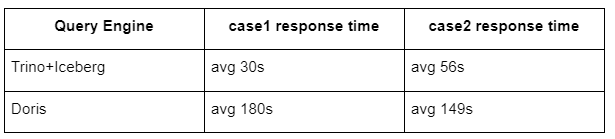

Here are the results of our tests.

case 1: join a large dataset

An 800GB table1 joins another 50GB table2 and performs complex business calculations

case2: use a large single table to do a distinct query

Test sql: select distinct (address) from table group by day

The Trino+Iceberg combination is about 3 times faster than Doris in the same configuration.

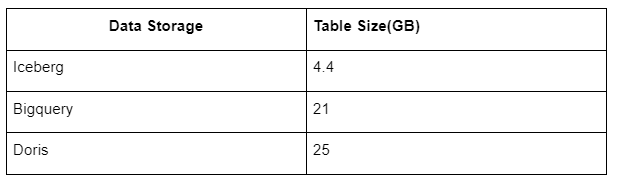

In addition, it is another surprise because Iceberg can use data formats like Parquet, ORC, etc., which will compress and store the data. Iceberg’s table storage only takes about 1/5 of the space of other data warehouses The storage size of the same table in the three databases is as follows:

Note: The tests above are examples we have encountered in actual production and are intended for reference only.

4.4. Upgrade effect

The performance test reports gave us enough performance that it took our team about 2 months to complete the migration and this is a diagram of our architecture after the upgrade.

- Several data engines match our different needs.

- Trino supports DBT, and can query Iceberg directly, so we no longer have to deal with data synchronization.

- The amazing performance of Trino + Iceberg allows us to open up all bronze data (raw data) to our users.

5. Summary

Since its launch in August 2021, the Footprint Analytics team has completed three architectural upgrades in less than a year and a half, thanks to its strong desire and determination to bring the benefits of the best database technology to its crypto users and solid execution of its implementation and upgrade underlying infrastructure and architecture.

The Footprint Analytics architecture upgrade 3.0 has bought a new experience for its users, allowing users from different backgrounds to gain insight into more diverse uses and applications:

- Built with the Metabase BI tool, Footprint makes it easier for analysts to access decoded data in the chain, explore with complete freedom of choice of tools (without code or hardcord), query the entire history and cross-examine datasets to gain insights in no time.

- Integrate both on-chain and off-chain data for analysis across web2 + web3;

- By building / querying metrics on top of Footprint’s business abstraction, analysts or developers save time on 80% of repetitive data processing work and focus on meaningful metrics, research and product solutions based on their business.

- Seamless experience from Footprint Web to REST API calls, all based on SQL

- Real-time alerts and actionable alerts on key signals to support investment decisions